Managing AWS AutoScaling using Terraform

Amazon Web Services (AWS) Auto Scaling is a cloud service that monitors applications and automatically adjusts capacity to maintain stable performance while keeping cloud costs low. AWS AutoScaling supports various AWS services, including Amazon EC2 instances, Amazon ECS tasks, Amazon DynamoDB, and Amazon Aurora. In addition, it integrates with Amazon CloudWatch to monitor application workloads and scale according to the application’s real-time metrics. This article focuses on managing AWS AutoScaling for Amazon EC2 instances using Terraform.

Prerequisites

To manage any AWS service with Terraform, including AWS AutoScaling, you need to install Terraform on your machine and set up access to your AWS account using an AWS access key.

Alternatively, you can set up and launch a Cloud9 IDE Instance.

Project Structure

This article walks through managing AWS AutoScaling groups, launch configurations, policies, schedules, notifications, lifecycle hooks, and load balancer attachments. Each section has an example that you can execute independently. You can put every example in a separate Terraform *.tf file to achieve the results shown in this article.

Some of the examples in this article are based on open-source Terraform modules. These modules are used to hide and encapsulate the implementation logic of your Terraform code into a reusable resource. You can reuse modules in multiple places of your Terraform project without repeatedly duplicating lots of Terraform code.

Note: you can find the source code of every open-source Terraform module on GitHub. For example, here’s the source code of the terraform-aws-modules/autoscaling/aws module.

All Terraform files are in the same folder and belong to the same Terraform state file.

Use the following commands to avoid unnecessary errors while following the article:

terraform validate to verify your Terraform HCL files

terraform plan to check the planned changes each time you create a Terraform file

terraform apply to create the resources in AWS

AWS Terraform provider

To start managing the AWS AutoScaling service, you need to declare the AWS Terraform provider in a providers.tf file.

terraform {

required_providers {

aws = {

source = "hashicorp/aws"

version = "3.69.0"

}

}

}

provider "aws" {

profile = "default"

region = "us-east-2"

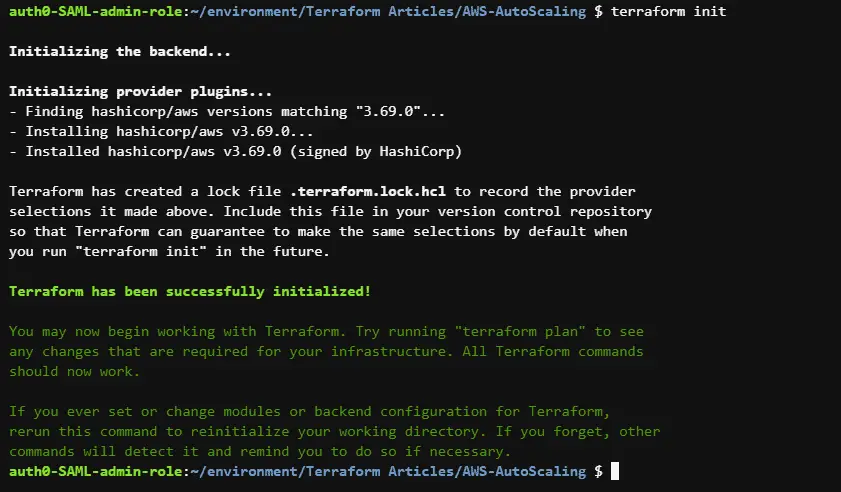

}Run the terraform init command to initialize the Terraform working directory with the AWS plugins.

Here is the execution output.

Managing AWS AutoScaling groups using Terraform

This section of the article will cover how to manage AWS AutoScaling groups using Terraform.

Create AutoScaling group

To create an AWS AutoScaling group, you can use the aws_autoscaling_group resource and pass the required arguments, such as the max_size and min_size of the AutoScaling group. The aws_launch_template resource defines the configuration of the EC2 instances that you wish to have within your AutoScaling group.

resource "aws_launch_template" "webservers" {
  name_prefix   = "webservers"
  image_id      = "ami-0c096ca5a3fbca310"
  instance_type = "t2.micro"
}

resource "aws_autoscaling_group" "webservers" {
  availability_zones = ["us-east-2a"]
  desired_capacity   = 1
  max_size           = 3
  min_size           = 1

  launch_template {
    id      = aws_launch_template.webservers.id
    version = "$Latest"
  }
}

Alternatively, you can create an AWS AutoScaling group using the open-source terraform-aws-modules AutoScaling module.

module "asg" {
  source = "terraform-aws-modules/autoscaling/aws"

  image_id           = "ami-0c096ca5a3fbca310"
  instance_type      = "t2.micro"
  name               = "webservers-asg"
  health_check_type  = "EC2"
  availability_zones = ["us-east-2a"]
  desired_capacity   = 1
  max_size           = 3
  min_size           = 1
}

Create an AutoScaling launch configuration

An AWS AutoScaling launch configuration is an instance configuration template that an AutoScaling group uses to launch EC2 instances. To create an AutoScaling launch configuration, use the aws_launch_configuration resource and assign the required arguments, such as the image_id and instance_type.

data "aws_ami" "amazon_linux" {
  most_recent = true

  filter {
    name   = "name"
    values = ["amzn2-ami-hvm-2.0.20220406.1-x86_64-gp2"]
  }

  filter {
    name   = "virtualization-type"
    values = ["hvm"]
  }

  owners = ["137112412989"] # Amazon
}

resource "aws_launch_configuration" "nginxserver" {
  name_prefix   = "nginxserver_launch_config"
  image_id      = data.aws_ami.amazon_linux.id
  instance_type = "t2.micro"

  lifecycle {
    create_before_destroy = true
  }
}

resource "aws_autoscaling_group" "nginxserver" {
  name                 = "nginxserver_asg"
  availability_zones   = ["us-east-2a"]
  launch_configuration = aws_launch_configuration.nginxserver.name
  min_size             = 1
  max_size             = 2

  lifecycle {
    create_before_destroy = true
  }
}

Create an AWS AutoScaling policy

AWS AutoScaling policies define the strategy and values that an AutoScaling group uses to add instances when an application needs more capacity and to remove them when demand drops. To create an AWS AutoScaling policy using Terraform, use the aws_autoscaling_policy resource and assign the needed arguments, such as the name of the AutoScaling policy and the autoscaling_group_name.
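Besides simple scaling, the aws_autoscaling_policy resource also accepts policy_type = "TargetTrackingScaling", which keeps a metric near a target value instead of adding a fixed number of instances. The following is only a minimal sketch and not part of the original walkthrough: the resource name cpu_target_tracking and the 50 percent CPU target are illustrative assumptions, and the policy attaches to the appserver AutoScaling group defined in this section.

```hcl
# Hypothetical variation: keep the group's average CPU utilization near 50%.
resource "aws_autoscaling_policy" "cpu_target_tracking" {
  name                   = "cpu_target_tracking_policy" # illustrative name
  policy_type            = "TargetTrackingScaling"
  autoscaling_group_name = aws_autoscaling_group.appserver.name

  target_tracking_configuration {
    predefined_metric_specification {
      predefined_metric_type = "ASGAverageCPUUtilization"
    }

    target_value = 50.0 # assumed target; tune it for your workload
  }
}
```

The simple scaling policy used in this article follows.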

resource "aws_autoscaling_policy" "simple_scaling" {
  name                   = "simple_scaling_policy"
  scaling_adjustment     = 3
  policy_type            = "SimpleScaling"
  adjustment_type        = "ChangeInCapacity"
  cooldown               = 100
  autoscaling_group_name = aws_autoscaling_group.appserver.name
}

resource "aws_autoscaling_group" "appserver" {
  availability_zones        = ["us-east-2a"]
  name                      = "appserver"
  max_size                  = 5
  min_size                  = 2
  health_check_grace_period = 100
  health_check_type         = "ELB"
  force_delete              = true
  launch_configuration      = aws_launch_configuration.appserver.name
}

resource "aws_launch_configuration" "appserver" {
  name_prefix = "appserver_launch_config"
  # Reuses the amazon_linux AMI data source declared in the launch configuration example.
  image_id      = data.aws_ami.amazon_linux.id
  instance_type = "t2.micro"

  lifecycle {
    create_before_destroy = true
  }
}

Create an AWS AutoScaling schedule

An AWS AutoScaling schedule scales the number of instances up or down according to a predefined schedule. To create an AWS AutoScaling schedule using Terraform, you can use the aws_autoscaling_schedule resource and assign the required arguments, such as the autoscaling_group_name and scheduled_action_name.
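For capacity changes that repeat, the aws_autoscaling_schedule resource also accepts a recurrence argument in cron format. As a hedged sketch (the resource name, the capacity values, and the weekday 18:00 UTC cron expression are illustrative assumptions, not taken from the original example), a recurring action for the batchprocess_server group defined in this section might look like this:

```hcl
# Hypothetical recurring action: raise capacity at 18:00 UTC, Monday to Friday.
resource "aws_autoscaling_schedule" "batchprocess_server_weekday_evening" {
  scheduled_action_name  = "batchprocess_server_weekday_evening" # illustrative name
  min_size               = 1
  max_size               = 4
  desired_capacity       = 2              # assumed value
  recurrence             = "0 18 * * 1-5" # cron format, evaluated in UTC by default
  autoscaling_group_name = aws_autoscaling_group.batchprocess_server.name
}
```

The one-time schedule used in this article, with explicit start and end times, follows.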

resource "aws_autoscaling_group" "batchprocess_server" {
  availability_zones        = ["us-east-2a"]
  name                      = "batchprocess_server_asg"
  max_size                  = 4
  min_size                  = 1
  health_check_grace_period = 100
  health_check_type         = "ELB"
  force_delete              = true
  termination_policies      = ["OldestInstance"]

  launch_template {
    id      = aws_launch_template.batchprocess_servers.id
    version = "$Latest"
  }
}

resource "aws_autoscaling_schedule" "batchprocess_server_autoscaling_schedule" {
  scheduled_action_name = "batchprocess_server_autoscaling_schedule"
  min_size              = 0
  max_size              = 4
  desired_capacity      = 0
  # Times are in UTC; replace them with values that are still in the future when you apply.
  start_time             = "2022-04-20T18:00:00Z"
  end_time               = "2022-04-22T06:00:00Z"
  autoscaling_group_name = aws_autoscaling_group.batchprocess_server.name
}

resource "aws_launch_template" "batchprocess_servers" {
  name_prefix   = "batchprocess_servers"
  image_id      = "ami-0c096ca5a3fbca310"
  instance_type = "t2.micro"
}

Create an AutoScaling group notification

An AutoScaling group notification lets you receive notifications whenever a specific event happens to the EC2 instances within your AutoScaling groups. To create an AWS AutoScaling group notification, you can use the aws_autoscaling_notification resource and assign the required arguments, such as the group_names, notifications, and topic_arn.

resource "aws_autoscaling_notification" "webserver_asg_notifications" {
  group_names = [
    aws_autoscaling_group.webserver_asg.name,
  ]

  notifications = [
    "autoscaling:EC2_INSTANCE_LAUNCH",
    "autoscaling:EC2_INSTANCE_TERMINATE",
    "autoscaling:EC2_INSTANCE_LAUNCH_ERROR",
    "autoscaling:EC2_INSTANCE_TERMINATE_ERROR",
  ]

  topic_arn = aws_sns_topic.webserver_topic.arn
}

resource "aws_sns_topic" "webserver_topic" {
  name = "webserver_topic"
}

resource "aws_autoscaling_group" "webserver_asg" {
  name               = "webserver_asg"
  availability_zones = ["us-east-2a"]
  desired_capacity   = 1
  max_size           = 3
  min_size           = 1

  launch_template {
    id      = aws_launch_template.webserver.id
    version = "$Latest"
  }
}

resource "aws_launch_template" "webserver" {
  name_prefix   = "webserver"
  image_id      = "ami-0c096ca5a3fbca310"
  instance_type = "t2.micro"
}
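The example above publishes events to an SNS topic, but nobody receives them until the topic has a subscriber. A minimal sketch of an email subscription could look like the following; the resource name and the ops@example.com address are placeholders, and it assumes a provider version that supports the email protocol for aws_sns_topic_subscription.

```hcl
# Hypothetical subscriber for the webserver_topic SNS topic defined above.
resource "aws_sns_topic_subscription" "webserver_topic_email" {
  topic_arn = aws_sns_topic.webserver_topic.arn
  protocol  = "email"
  endpoint  = "ops@example.com" # placeholder address, replace with your own
}
```

Email subscriptions stay in a pending state until the recipient confirms them through the message that SNS sends, so notifications start arriving only after that confirmation.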

Create an AutoScaling lifecycle hook

AutoScaling lifecycle hooks let you react to events in an AutoScaling instance’s lifecycle and perform specified actions corresponding to those events, such as running custom scripts while an instance is launching or terminating. To create an AWS AutoScaling lifecycle hook, you can use the aws_autoscaling_lifecycle_hook resource and assign the required arguments, such as the name, autoscaling_group_name, and lifecycle_transition.

resource "aws_autoscaling_group" "batch_app_server" {
  availability_zones   = ["us-east-2a"]
  name                 = "batch_app_server"
  max_size             = 3
  min_size             = 1
  health_check_type    = "EC2"
  termination_policies = ["OldestInstance"]

  launch_template {
    id      = aws_launch_template.batch_app_server.id
    version = "$Latest"
  }
}

resource "aws_autoscaling_lifecycle_hook" "batch_app_server_lifecycle_hook" {
  name                   = "batch_app_server_lifecycle_hook"
  autoscaling_group_name = aws_autoscaling_group.batch_app_server.name
  default_result         = "CONTINUE"
  heartbeat_timeout      = 100
  lifecycle_transition   = "autoscaling:EC2_INSTANCE_LAUNCHING"
}

resource "aws_launch_template" "batch_app_server" {
  name_prefix   = "batch_app_server"
  image_id      = "ami-0c096ca5a3fbca310"
  instance_type = "t2.micro"
}

Create an AutoScaling attachment

To attach an AWS AutoScaling group to a Classic Elastic Load Balancer (ELB) or to the target group of an Application Load Balancer (ALB), you can use the aws_autoscaling_attachment resource and assign the required arguments, such as the autoscaling_group_name.
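For the ALB case, the attachment points at a target group rather than at the load balancer itself. The sketch below is an illustration under stated assumptions: the vpc_id variable, the target group, and the resource names are hypothetical, and it uses the alb_target_group_arn argument, which to my knowledge matches the 3.x provider pinned in this article (newer provider releases rename it to lb_target_group_arn).

```hcl
# Hypothetical input: ID of the VPC in which the AutoScaling group launches instances.
variable "vpc_id" {
  type        = string
  description = "VPC that hosts the webservers AutoScaling group (assumed input)"
}

# Hypothetical target group that an Application Load Balancer listener would forward to.
resource "aws_lb_target_group" "webservers" {
  name     = "webservers-tg" # illustrative name
  port     = 8080
  protocol = "HTTP"
  vpc_id   = var.vpc_id
}

# Registers the instances of the webservers AutoScaling group with the target group.
resource "aws_autoscaling_attachment" "webservers_alb_attachment" {
  autoscaling_group_name = aws_autoscaling_group.webservers.id
  alb_target_group_arn   = aws_lb_target_group.webservers.arn
}
```

The target group must live in the same VPC as the instances of the AutoScaling group. The Classic Load Balancer attachment used in this article follows.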

resource "aws_autoscaling_attachment" "webservers_asg_attachment" {
  autoscaling_group_name = aws_autoscaling_group.webservers.id
  elb                    = aws_elb.webservers_loadbalancer.id
}

# Note: the first example in this article declares the same webservers launch template
# and AutoScaling group. If both examples share one folder, keep only one copy of each,
# because Terraform rejects duplicate resource addresses.
resource "aws_launch_template" "webservers" {
  name_prefix   = "webservers"
  image_id      = "ami-0c096ca5a3fbca310"
  instance_type = "t2.micro"
}

resource "aws_autoscaling_group" "webservers" {
  availability_zones = ["us-east-2a"]
  desired_capacity   = 1
  max_size           = 3
  min_size           = 1

  launch_template {
    id      = aws_launch_template.webservers.id
    version = "$Latest"
  }

  depends_on = [
    aws_elb.webservers_loadbalancer
  ]
}

resource "aws_elb" "webservers_loadbalancer" {
  name               = "webservers-loadbalancer"
  availability_zones = ["us-east-2a", "us-east-2b"]

  listener {
    instance_port     = 8080
    instance_protocol = "http"
    lb_port           = 80
    lb_protocol       = "http"
  }

  health_check {
    healthy_threshold   = 2
    unhealthy_threshold = 2
    timeout             = 3
    target              = "HTTP:8080/"
    interval            = 30
  }
}

Summary

Amazon Web Services (AWS) Auto Scaling is a cloud service that monitors applications and automatically adjusts capacity to maintain stable performance while keeping cloud costs low. This article covered how to use Terraform to automate AWS AutoScaling for EC2 Instances.